Databases are a core component in lot of applications and websites. Almost everything is stored in databases. Let’s take a standard e-commerce website, we can find in databases a lot of business critical information: about customers (PII), articles, prices, stocks, payment (PCI), orders, logs, sessions, etc. Like any component of an IT infrastructure, databases must be properly monitored from a security point of view. There are often an Achille’s heel due to security issues. Common problems are a lack of access control on the SQL commands allowed or bad passwords. All databases have mechanisms to log events related to sessions (login, logout) or system but what about the detection of unauthorized modifications of data stored in tables? Those can compromise the database integrity. How to implement such controls with a very common database server (MySQL)?

Databases are a core component in lot of applications and websites. Almost everything is stored in databases. Let’s take a standard e-commerce website, we can find in databases a lot of business critical information: about customers (PII), articles, prices, stocks, payment (PCI), orders, logs, sessions, etc. Like any component of an IT infrastructure, databases must be properly monitored from a security point of view. There are often an Achille’s heel due to security issues. Common problems are a lack of access control on the SQL commands allowed or bad passwords. All databases have mechanisms to log events related to sessions (login, logout) or system but what about the detection of unauthorized modifications of data stored in tables? Those can compromise the database integrity. How to implement such controls with a very common database server (MySQL)?

Of course, MySQL already implements some logging features, configured via your my.cnf file or the command line. There are five types of logs available in MySQL:

- Error log: contains the events related to the MySQL daemon

- General query log: contains the client connection and queries

- Binary log: contains the changes applied to data (for rollback & replication)

- Relay log: contains the changes performed during replication

- Slow query log: contains the queries that took more time than expected

The most important log is the query log but it is a “performance killer“: all logged queries may use a lot of resources (CPU, storage) and the amount of events to process could be a pain to process.

Basically, I would like to monitor all changes performed on a specific table called “users” which contains the credentials of all users allowed to use an application. From a SQL point of view, three commands must be monitored: “INSERT”, “UPDATE” and “DELETE”. Of course, changes will be reported by OSSEC (why change a winning team?).

MySQL has two interesting features which be very helpful to achieve this:

- Triggers – From the MySQL website, a trigger is defined as is “a named database object that is associated with a table, and that activates when a particular event occurs for the table“. An example of trigger is copying all new inserted records in table “A” to table “B” for backup purpose.

- UDF (“User Defined Functions“) – UDF is a powerful way to extend the features of a MySQL server. New functions are added via shared libraries (example of UDF: statistical functions, strings manipulations). There is an online repository of free UDS called mysqludf.org.

Triggers will be defined on the table(s) we need to monitor. One trigger per command which could alter the data integrity: insert, update and delete. As I’m not a SQL expert, I found an interesting paper from Peter Brawley about “Transaction time validity in MySQL” (link). In his paper, Peter explains how to keep an audit trail of the changes performed on a table by inserting new rows in a “log” table each time a change is performed on the main one. But, there is a major issue: our changes are logged into MySQL and OSSEC cannot directly read a MySQL table. My first idea was to use an active-response script to dump the data but it looked too much resources consuming. On the other side, OSSEC is a king to parse text files. Unfortunately, new problem, a query like “SELECT … INTO OUTFILE xxx” does not work from triggers and the output file cannot already exists for security reasons.

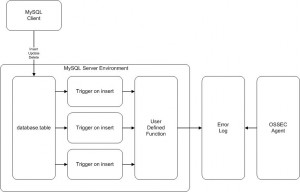

Another way to interact with the file system is by using UDF. The mysqludf.org site has very interesting stuff. Let’s have a look at lib_mysqludf_log which does exactly what we need: It creates a new function called log_error() which writes the string passed as argument into the MySQL error log. OSSEC could now access all relevant information via this file. One picture is worth a thousand words, here is how the integrity checks will be performed:

Let’s implement the whole stuff…

First, install the lib_mysqludf_log.so shared library (Note that the MySQL development environment is required):

# cd /tmp # wget http://www.mysqludf.org/lib_mysqludf_log/lib_mysqludf_log_0.0.2.tar.gz # tar xzvf lib_mysqludf_log_0.0.2.tar.gz # gcc -I/usr/include/mysql -shared -fPIC -o lib_mysqludf_log.so lib_mysqludf_log.c # cp lib_mysqludf_log.so /usr/lib/mysql/plugin # service mysql restart

Once done, enable the new function (this must be performed for each database you would like to monitor):

# mysql -u user -p

mysql> create database acme;

mysql> use acme;

mysql> create function lib_mysqludf_log_info

-> returns string soname 'lib_mysqludf_log.so';

mysql> create function log_error

-> returns string soname 'lib_mysqludf_log.so';

To test, launch a ‘tail -f errors.log’ in a shell, and send the following query to your MySQL server:

mysql> use acme;

mysql> select log_error("foobar");

+---------------------+

| log_error("foobar") |

+---------------------+

| NULLÂ Â Â Â Â Â Â Â Â Â Â Â Â Â Â |

+---------------------+

1 row in set (0.00 sec)

You should see the string “foobar” appended at the end of the errors.log. The SQL output is normal and can be simply ignored. Now, we have a simple way to write data that will be parsed by OSSEC. Let’s create some triggers. In the example below, I need to monitor the table containing user credentials (to access a web application). Any change which could alter the table integrity must be reported. First, we create the table ‘users’ then we add one trigger per action.

We also need to create a fake table (called “dummy” in the example below) because the triggers need to execute a fake insert query. This table will always remains empty. Alternatively, you can use an existing table.

# mysql -u root -p acme

mysql> create table users (

-> id int primary key auto_increment,

-> login char(20) not null,

-> password char(20) not null);

mysql> create table dummy (

-> fake char(1) not null);

mysql> create trigger users_insert after insert on users

-> for each row

-> insert into dummy values(

-> log_error(concat(now()," Table: acme.users: insert(",

-> NEW.id,",",NEW.login,",",NEW.password,") by ",user())));

mysql> create trigger users_delete after update on users

-> for each row

-> insert into dummy values(

-> log_error(concat(now()," Table: acme.users: update(",

-> NEW.id,",",NEW.login,",",NEW.password,") by ", user())));

mysql> create trigger users_delete after delete on users

-> for each row

-> insert into dummy values(

-> log_error(concat(now()," Table: acme.users: delete(",

-> OLD.id,",",OLD.login,",",OLD.password,") by ", user())));

Our newly implemented function log_error() is very basic and does not allow to format the string with variables. We have to use the concat() SQL function to build a single string containing the original fields as well as interesting information like a timestamp and the logged-in user. From a security point of view, the fields are passed directly to the log_error() function without further checks! Don’t forget that all data stored in a database must be properly sanitized by the application. It’s time to test:

# mysql -u root -p acme

mysql> insert into users values(7, "xavier", encrypt("password"));

mysql> update users set password=encrypt("newpassword") where id=7;

mysql> delete from users where id=7;

The results will be immediately written in the MySQL errors.log:

# tail -f errors.log 2011-01-07 16:38:30 Table: acme.users: insert(7,xavier,zZDDIZ0NOlPzw) by admin@localhost 2011-01-07 16:38:35 Table: acme.users: update(7,xavier,RjdqndSkxdjeX) by admin@localhost 2011-01-07 16:38:53 Table: acme.users: delete(7,xavier,RjdqndSkxdjeX) by admin@localhost

The last step is to configure your OSSEC server/agent to handle the new events. Create a new rule in your local_rules.xml and configure your server/agent to process the errors.log file:

<!-- MySQL Integrity check -->

<rule id="100025" level="7">

<regex>^\d\d\d\d-\d\d-\d\d \d\d:\d\d:\d\d Table: \.</regex>

<description>MySQL users table updated</description>

</rule>

<localfile>

<log_format>syslog</log_format>

<location>/var/lib/mysql/errors.log</location>

</localfile>

And the final result, an OSSEC notification:

OSSEC HIDS Notification. 2011 Jan 08 00:31:24 Received From: (xxxxx) xx.xxx.xxx.xxx->/var/lib/mysql/errors.log Rule: 100025 fired (level 7) -> "MySQL users table updated" Portion of the log(s): 2011-01-08 00:31:24 Table: acme.users: insert(8,brian,qavXvxlEVykwm) by admin@localhost --END OF NOTIFICATION

This is a simple example of data changes audit in databases. Remember that auditing database transactions has a huge impact on performance and is not practical. This method does not impact your server performances and do not pollute your logs. As usual, this must be seen as a proof-of-concept. Comments are welcome!

I never used Windows as a MySQL server. But it looks that UDF support is also available on this platform.

Have a look here: https://dev.mysql.com/doc/refman/5.7/en/udf-compiling.html

Good luck!

Hello,

Nice one your solution sounds really good. However I am having some issues implementing this on mysql database hosted on my windows 10 OS. How do I go about fully implementing this solution on my windows platform.

I am stuck especially at the First stage, installing the lib_mysqludf_log.so onto the shared library on my windows platform.

Kindly provide me with information or direct me to right source to get this solution fully implemented onto my platform

Hello Andrew,

Thank you for the feedback and the mention in your article, really appreciated!

Hi Xavier,

Thanks very much much for a brilliant article. I was looking into this same thing recently when I cam across your post. I hope you don’t mind but I put a link in to my blog post back to this article.

blogpost

Kind regards,

Andy

Hi,

Extraordinary work !!

Brilliant. Using OSSEC was a nice idea, and you’re doing it well.

One of the most valuable post i rode on defensive part.

This post on db, plus other security events on USB (detect of keys inserted), psexec presence (particular windows logs events sent by OSSEC windows agent) can on my point greatly improve the security domain.

Thanks again.

++$

Hello sha8e,

No you don’t need to start a new database from zero. You can add/remove triggers as well as the UDF on any existing database!

The UDF must be enabled on _all_ the database you’d like to audit. This can be done on any existing DB. But, warning, the UDF is available once the shared library has been loaded (which requires a MySQL daemon restart)!

Cheers,

Xavier

Hi Xavier,

I really enjoyed reading every single line. Do you think it is possible to add this solution to an already exist Database? Or do we have to build the DB tables from scratch in order to add the logging feature to it? Because as you know some systems when you upgrade them might go insane if we try to add the logging field manually to the existed table.

Thanks,

Hi Mickael,

Thank you for the feedback. Really appreciated.

Indeed, there is a lack of easy and standardized SQL databases control.

Your OSSEC posts are top-notch! Very well done. I am working on Oracle support for OSSEC right now and was surprised at how scattered some of the information about how to audit particular actions is. I think we need a standardized way to support new applications (e.g. all new database support should include schema changes, add/remove users, privilege changes, etc.)