Tonight, the first OWASP Belgium chapter meeting of 2015 took place in Leuven. Because the SecAppDev event was also held in Belgium last week, many good speakers were in the country, and it was a good opportunity to ask them to give a talk at a chapter meeting. As usual, Seba opened the event and reviewed the latest OWASP Belgium news before handing over to the speakers.

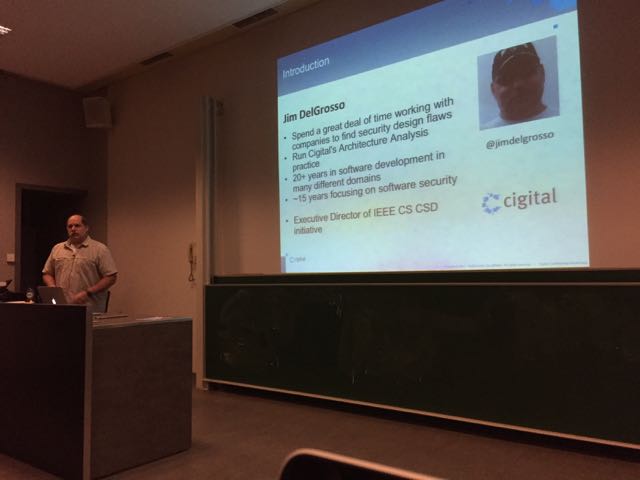

The first speaker was Jim DelGrosso from Cigital. Jim talked about “Why code review and pentests are not enough?”. His key message was the following: penetration tests are useful, but they can’t find all types of vulnerabilities. That’s why other checks are required. So how can we improve our security tests? Before conducting a penetration test, it is a good idea to simply review the design of the target application: some flaws can already be found there! At this point, it is very important to distinguish between a “bug” and a “flaw”. Bugs are related to the implementation, while flaws are “by design”. The ratio between bugs and flaws is almost 50/50. Jim reviewed some examples of bugs: XSS and buffer overflows are nice ones. To summarize, a bug is a “coding problem”. And flaws? Examples are weak, missing or wrong security controls (e.g. a security feature that can be bypassed by the user). But in practice, how do we find them? Are there tools available? To find bugs, the classic code review process is used (we look for patterns). Pentests can also find bugs, and they overlap with the search for flaws. Finally, a good analysis of the architecture will focus on flaws. Jim went through more examples to make sure that the audience understood the difference between the two problems:

- LDAP injection: Bug

- Two-factor authentication bypass: Flaw

- Altered log files: Flaw

- Writing sensitive data to logs: Bug

- Log injection: Bug

- Hardcoded encryption key in the source code: Bug & Flaw (both)
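To make the “bug” side more concrete, here is a minimal Python sketch of the log injection case from the list above. The function names (`sanitize_for_log`, `format_login_failure`) and the log line format are assumptions made for illustration; they do not come from Jim’s talk.

```python
def sanitize_for_log(value: str) -> str:
    """Escape CR/LF so that user input cannot start a forged log line."""
    return value.replace("\r", "\\r").replace("\n", "\\n")


def format_login_failure(username: str) -> str:
    """Build a single log line for a failed login (hypothetical format)."""
    return "login failed for user=" + sanitize_for_log(username)


# An attacker-controlled username that tries to inject a fake log entry.
attack = "alice\n2015-02-24 INFO login succeeded for user=admin"
line = format_login_failure(attack)
print(line)
```

The fix lives entirely in the code, which is what makes it a bug. A flaw such as “altered log files” would instead require a design change, for example shipping logs to a place the application cannot modify.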

Then Jim asked the question: “How are we doing?” regarding software security. The OWASP Top 10 has been a good reference for most of us for almost ten years now. Jim compared the different versions across the years and showed that the same attacks remain, but their severity level changes regularly. Also, seven of them have been the same for ten years! Does it mean that they are very hard to solve? Do we need new tools? Some vulnerabilities dropped or disappeared because developers now use frameworks that are better protected. Others are properly detected and blocked. A good example is XSS attacks blocked by modern browsers. Something new appeared in 2013: the usage of components with known vulnerabilities (dependencies in apps).

So in practice, how do we find flaws? Jim recommends performing code reviews. Penetration tests will find fewer flaws and will require more time. But we need something else: a new type of analysis focusing on how we design a system, and a different set of checklists. That’s why the IEEE Computer Society started a project to expand their presence in security. They started with an initial group of contributors and built a list of recommendations to avoid the top-10 security design flaws:

- Earn or give, but never trust or assume

- Use an authentication mechanism that cannot be bypassed

- Authorise after authenticating

- Separate data and control instructions

- Define an approach that ensures all data are explicitly validated

- Use cryptography correctly

- Identify sensitive data

- Always consider the users

- Understand how integrating external components changes the attack surface

- Be flexible with future changes
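As an illustration of one item in this list, “separate data and control instructions”, here is a small Python sketch using the standard `sqlite3` module. The table layout and the `find_user` helper are hypothetical; the point is only that the SQL text stays fixed while the user-supplied value travels separately as a parameter.

```python
import sqlite3


def find_user(conn, name):
    """Look up a user by name with a parameterized query."""
    # The SQL text (control) is constant; the name (data) is bound separately.
    cur = conn.execute("SELECT id, name FROM users WHERE name = ?", (name,))
    return cur.fetchall()


# Hypothetical in-memory database used only for this example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

rows_ok = find_user(conn, "alice")
rows_attack = find_user(conn, "' OR '1'='1")
print(rows_ok, rows_attack)
```

With string concatenation, the second call would turn the payload into SQL syntax; with a bound parameter it is simply compared as a (non-matching) name.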

Heartbleed is a nice example of how integrating external components may increase your attack surface. In this case, the OpenSSL library is used to provide features (cryptography), but it also introduced a bug. To conclude his presentation, Jim explained three ways to find flaws:

- Dependency analysis: software is built on top of layers of other pieces of software. This covers vulnerabilities in open source projects, weak security controls provided by the framework in use, and framework features that must be disabled or configured to their secure form.

- Known attack analysis: understanding attacks is important to know what controls are needed to prevent them. Examples: client-side trust, XSS, CSRF, logging and auditing, click-jacking, session management.

- System-specific analysis: weaknesses in a custom protocol, reusing authentication credentials or not following good design principles.
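The dependency analysis idea can be sketched in a few lines of Python. Everything here is an assumption for illustration: the package names, the `ADVISORIES` table (package name mapped to the first fixed version) and the purely numeric version scheme are made up, and a real check would rely on a proper vulnerability feed and version parser.

```python
def parse_version(text):
    """Turn a dotted numeric version such as '1.0.2' into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


# Hypothetical advisory data: package name -> first version containing the fix.
ADVISORIES = {"examplelib": "1.0.2", "othercms": "3.4.0"}


def vulnerable_dependencies(pinned):
    """Return (name, installed, fixed_in) for every dependency older than its fix."""
    findings = []
    for name, version in sorted(pinned.items()):
        fixed_in = ADVISORIES.get(name)
        if fixed_in and parse_version(version) < parse_version(fixed_in):
            findings.append((name, version, fixed_in))
    return findings


# Hypothetical list of pinned dependencies of an application.
pinned = {"examplelib": "1.0.1", "othercms": "3.4.0", "safelib": "2.0.0"}
findings = vulnerable_dependencies(pinned)
print(findings)
```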

How was the honeypot deployed? Aurélien explained that 500 vulnerable websites were deployed on the Internet using 100 registered domains with five subdomains each. They were hosted at nine of the biggest hosting providers. Each website ran five common CMSs with classic vulnerabilities. Once deployed, the data collection ran for 100 days. Each website acted as a proxy and redirected its traffic to the real web apps running on virtual machines. Why virtual machines? They are easy to reinstall, they allow full logging and they make it easy to tailor and limit the attackers’ privileges. The collected data was impressive:

- 10GB of raw HTTP requests

- An average of 2h10 after deployment before the first compromise by automated tools (bots)

- And 4h30 for manual attacks

- Most attackers? USA, Russia and Ukraine (65% of all requests).
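A figure like the average time before the first compromise can be derived from deployment and attack timestamps. The following Python sketch shows one way to compute it; the site names and timestamps are invented sample data, not values from the study, and the function name is an assumption.

```python
from datetime import datetime, timedelta


def mean_time_to_first_attack(deployed_at, first_attack_at):
    """Average delay between deployment and first attack over all attacked sites."""
    deltas = [first_attack_at[site] - deployed_at[site] for site in first_attack_at]
    return sum(deltas, timedelta()) / len(deltas)


# Invented sample data for two honeypot sites.
deployed_at = {
    "site-a": datetime(2015, 1, 1, 0, 0),
    "site-b": datetime(2015, 1, 1, 0, 0),
}
first_attack_at = {
    "site-a": datetime(2015, 1, 1, 2, 0),
    "site-b": datetime(2015, 1, 1, 2, 20),
}

average = mean_time_to_first_attack(deployed_at, first_attack_at)
print(average)
```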

Aurélien gave some facts about the different phases of an attack:

- Discovery: 70% of attacks start with a bot. In 84% of cases, the attack itself is launched by a second automated tool. 50% of the requests had a referrer.

- Reconnaissance: done by crawlers that mimic the classic user agents of well-known search engines.

- Exploitation: 444 distinct exploitation sessions (75% rely on Perl, they like Perl :-)), web shells, phishing files.

- Post-exploitation: 8500 interactive sessions collected (web shells), 5 minutes on average. 61% uploaded a file, 50% (tried to) modify a file and 13% performed a defacement.

Based on the statistics, some trends were confirmed:

- Strong presence of Eastern Europe

- African countries: spam & phishing campaigns

- Pharma ads: most common spam

The second part of the presentation focused on hosting providers. Do they complain? How do they detect malicious activity (if they detect it at all)? Do they care about security? Today, hosting solutions are cheap and there are millions of websites maintained by inexperienced owners. This makes the attack surface very large. Hosting providers should play a key role in helping users. Is it the case? Alas, according to Aurélien, no! To perform the tests, EURECOM registered multiple shared hosting accounts at multiple providers, deployed web apps and simulated attacks:

- Infection by botnet

- Data exfiltration (SQLi)

- Phishing sites

- Code inclusions

- Upload of malicious files

The findings:

- At registration time, some providers did some screening (like phone calls), some verified the provided data and only three offered a 1-click registration (no check at all).

- Some have URL blacklisting in place.

- Filtering occurs at OS level (ex: to prevent callbacks on suspicious ports) but the detection rate is low in general.

- About the abuse reports: 50% never replied; amongst the others, 64% replied within one day. There was a wide variety of reactions.

- Some providers offer (read: sell) security add-ons. Five out of six did not detect anything. One detected something but never notified the customer.
