Here is a quick wrap-up of the first OWASP Belgium Chapter meeting of 2013, organised today in Leuven. SecAppDev is running this week, so it was a good opportunity to bring some trainers to an evening meetup: Yves Younan and Steven Murdoch. Lieven, from the OWASP team, gave a short review of the current Belgium chapter & projects. The room was full of (new) people. There were so many attendees that the organisers had to make a last-minute switch to a bigger room! That’s very good: seeing old friends is always nice, but new faces are always welcome. OWASP has so many important messages to broadcast to people. If you have never attended such an event, please do so next time… and it’s free!

The first speaker, Yves, is a Security Researcher at SourceFire and talked about “25 years of vulnerabilities”. To perform this research, Yves looked at the main vulnerability databases like CVE & NVD. The goal was to build an overview of the vulnerabilities reported over the past years and, based on that, to see whether some trends could be expected for the coming years. Since vulnerabilities started to be indexed (in 1988), 54,000 of them have been reported. Yves gave some statistics based on two levels of criticality: the serious vulnerabilities (CVSS >= 7) and the critical ones (CVSS = 10). This scoring is based on multiple factors: is the vulnerability remotely exploitable, does it affect data integrity, availability, etc. Note that if not enough data is provided, the vulnerability is classified as critical by default. This is a safe behaviour: if you don’t know your enemy, expect the worst. Since 1988, there has clearly been a trend, as seen in the picture below, but fewer vulnerabilities were tagged as “serious” (33% in 2012). 9.16% were tagged as “critical” in 2012. Vulnerabilities are classified by type:

- Authentication

- Credential management

- Access control

- Buffer errors (overflow)

- CSRF

- XSS

- …

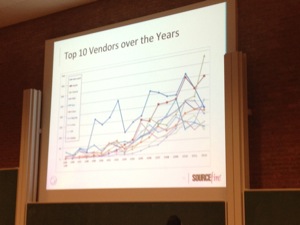
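The two severity levels used in the talk can be sketched in a few lines of Python. This is only an illustration of the thresholds quoted above (serious: CVSS >= 7, critical: CVSS = 10, and "not enough info" treated as the worst case). The function names and the use of `None` for a missing score are my own assumptions, not something taken from the report.

```python
from typing import Iterable, Optional, Tuple

SERIOUS_THRESHOLD = 7.0   # "serious": CVSS >= 7
CRITICAL_SCORE = 10.0     # "critical": CVSS = 10


def effective_score(score: Optional[float]) -> float:
    """Return the score used for bucketing.

    None models the "not enough info" case (an assumption of this sketch)
    and is mapped to the worst possible score.
    """
    if score is None:
        return CRITICAL_SCORE
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    return score


def is_serious(score: Optional[float]) -> bool:
    return effective_score(score) >= SERIOUS_THRESHOLD


def is_critical(score: Optional[float]) -> bool:
    return effective_score(score) == CRITICAL_SCORE


def shares(scores: Iterable[Optional[float]]) -> Tuple[float, float]:
    """Return (serious share, critical share) for a list of scores."""
    scores = list(scores)
    if not scores:
        return (0.0, 0.0)
    serious = sum(1 for s in scores if is_serious(s))
    critical = sum(1 for s in scores if is_critical(s))
    return (serious / len(scores), critical / len(scores))
```

Note that with these definitions every critical vulnerability is also a serious one, so the critical share can never exceed the serious share.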

The most frequent types were buffer overflows, XSS and access control. The top-3 for serious vulnerabilities: buffer overflow, SQL injection & code injection. For critical vulnerabilities: buffer overflow, “not-enough-info” and access control. And what about our best friends, the security vendors? The top-10 vendors account for 14K vulnerabilities, but we must keep in mind that some vendors have a lot of products in their catalog. The top-3 in numbers was Microsoft, Apple & Oracle. The serious top-3 was Microsoft, Apple & Cisco, and the critical one was HP, IBM & Mozilla. BTW, it’s pretty certain that Oracle will gain some positions in 2013.

About the products:

- In numbers: Firefox, MacOS X, Chrome

- Serious: Windows XP, Firefox, Chrome

- Critical: Firefox, Thunderbird, Seamonkey.

Note that some products share a lot of code: think about Firefox & Thunderbird (both are developed by Mozilla). What about Linux? Red Hat is the winner, followed by SUSE & Gentoo. And for Microsoft, the winners are Windows XP, Server 2003 and Server 2000. Of course, for a few years now, mobile phones have also suffered from vulnerabilities. In this scope, Apple is the winner with its iPhone, which accounts for 81% of the mobile vulnerabilities. This looks strange because there is far more malware for Android. Then Yves explained the methodology used to try to count 0-day vulnerabilities in Microsoft products. How? If a CVE is published before the corresponding Microsoft Security Bulletin, it can be considered a 0-day. Results? In most cases, Microsoft communicates before a CVE is assigned. Only 13% could be considered 0-day vulnerabilities.
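The 0-day counting rule is easy to express in code. The sketch below is my own reading of the methodology (compare the CVE publication date with the bulletin date), not the script used for the report. The `Vulnerability` record and its field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable


@dataclass(frozen=True)
class Vulnerability:
    """Hypothetical record: one CVE and the bulletin that covers it."""
    cve_id: str
    cve_published: date
    bulletin_published: date


def is_zero_day(vuln: Vulnerability) -> bool:
    # Rule from the talk: the CVE was public before the vendor bulletin.
    return vuln.cve_published < vuln.bulletin_published


def zero_day_share(vulns: Iterable[Vulnerability]) -> float:
    """Fraction of vulnerabilities that count as 0-days under this rule."""
    vulns = list(vulns)
    if not vulns:
        return 0.0
    return sum(1 for v in vulns if is_zero_day(v)) / len(vulns)


# Made-up sample data, only to show how the rule is applied.
SAMPLE = [
    Vulnerability("EXAMPLE-0001", date(2012, 3, 1), date(2012, 3, 13)),   # 0-day
    Vulnerability("EXAMPLE-0002", date(2012, 4, 10), date(2012, 4, 10)),  # same day
    Vulnerability("EXAMPLE-0003", date(2012, 6, 20), date(2012, 6, 12)),  # bulletin first
]
```

With this strict comparison, a CVE published on the same day as the bulletin is not counted as a 0-day. That is a design choice of the sketch, and the report may have handled this case differently.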

And what is the situation today? (statistics for the period from 1st January to 14th February) The type “not-enough-info” comes in first place. Buffer overflows remain in 2nd position. And who’s the top vendor? Guess who? Oracle of course, with the multiple Java vulnerabilities reported in the last weeks. Finally, Yves tried to give some predictions about the future. For him, buffer overflows will remain a very important type of vulnerability. Access control and privilege issues will grow. At the vendor level, Oracle will remain in 1st position and Google will probably enter the top-10.

Some conclusions from this research? Fewer vulnerabilities were reported in 2012, but the percentage of critical ones increased, and this trend is expected to continue over the next two years! If you would like to read more about this topic, the full report is available here. The talk was not technical and was only based on vulnerability databases. I would have expected more facts. Usually, I don’t have a lot of time to read such reports with plenty of statistics, and this presentation was a great opportunity to review the report content. Maybe a last tip: regularly check sites like CVE, NVD or OSVDB to stay up to date with new vulnerabilities.

After a short break, Steven, Senior Researcher at the University of Cambridge, talked about a hot topic: security in banking applications. In the UK, “Chip & PIN” (based on the EMV standard) has been available for five years now. It’s convenient: the user puts his card in a reader and enters his PIN. The UK was a very early adopter (2006) of this system. The goal of EMV was to drastically reduce fraud. Did it succeed? This is not certain. Steven reviewed some fraud statistics, and some types, like counterfeit fraud, even grew. The techniques exploit backwards compatibility issues. Indeed, the old magstripe can still be used as a “failover” because the upgrade to Chip & PIN was too complex and expensive to be performed in one step!

Counterfeit fraud increased again after the deployment of EMV. It is easier to collect PINs at a PoS than at an ATM. Attackers try to find the weakest link. Online banking started in 2009 and is growing. The responsibility for some fraud shifted from the merchant to the customer. Another fact: PoS (“Point of Sale”) terminals are more difficult to harden than regular ATMs. Steven gave in-depth information about the vulnerability discovered by his university.

Then he talked about the “no-PIN attack”: it allows criminals to use a stolen card without knowing the PIN. To achieve this, you need a device between the genuine card and the reader. This is a kind of MitM attack. A demo was even performed for UK television.

This was three years ago! And today, what’s the situation? Well, according to Steven, not much has changed. The attack works against cards issued by some banks and not against others. Why was this attack possible? Because EMV is complex, relies on a badly designed set of flags exchanged between the card and the reader, and its implementations have problems. For the banks, it’s just a matter of risk: given the number of transactions, banks can accept the risk of facing some fraudulent events. Finally, the latest type of fraud, which is still growing in the UK, was reviewed: phishing & key loggers. Steven presented the different types of devices/controls used to authorise transactions, like more or less complex CAPTCHAs, TAN or DigiPass, but most of them also have issues.
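To make the no-PIN attack a bit more concrete, here is a toy model in Python. It is based on the publicly documented principle of the attack: the device in the middle answers the PIN verification request itself, so the terminal believes the PIN was correct while the card never sees a PIN at all. This is not real EMV code. The class names, the simplified single-command exchange and the hard-coded retry counter are all assumptions made for the illustration.

```python
from dataclasses import dataclass

SW_OK = 0x9000         # ISO 7816 status word: success
SW_WRONG_PIN = 0x63C2  # 63Cx: verification failed, x tries left (fixed to 2 here)


@dataclass
class Card:
    """Toy card: it only remembers whether it verified a PIN itself."""
    pin: str
    pin_verified: bool = False

    def verify(self, pin: str) -> int:
        self.pin_verified = (pin == self.pin)
        return SW_OK if self.pin_verified else SW_WRONG_PIN


class Wedge:
    """Toy man-in-the-middle device sitting between terminal and card."""

    def __init__(self, card: Card) -> None:
        self.card = card

    def verify(self, pin: str) -> int:
        # The request is never forwarded: the wedge claims success itself.
        return SW_OK


def terminal_pin_check(channel, entered_pin: str) -> bool:
    """The terminal only sees the status word coming back on the channel."""
    return channel.verify(entered_pin) == SW_OK


def views_are_consistent(terminal_says_ok: bool, card: Card) -> bool:
    """Cross-check of the terminal's view against the card's own view."""
    return terminal_says_ok == card.pin_verified
```

In this model, `terminal_pin_check(Wedge(card), "0000")` succeeds with any PIN, while `card.pin_verified` stays `False`. The mismatch between the two views is exactly what a cross-check like `views_are_consistent` would catch, which fits the remark above that the attack works against some banks' cards and not others.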

Steven’s conclusion: EMV systems are open to a variety of attacks. Their complexity is problematic. There is a lack of resistance measures implemented, and customers are still left liable. Today, for online banking, transaction authentication is essential, and it requires a trustworthy display. The research is available here. Compared to the first one, this presentation was very technical. Maybe a little too much for me, as I have no experience in this field.

@xme Thanks, nice wrap-up of yesterday!

@Tom

My slides are here, and will be on the OWASP site soon: http://www.cl.cam.ac.uk/~sjm217/talks/owasp13bankingsecurity.pdf

RT @xme: [/dev/random] OWASP Belgium Chapter Wrap-Up March 2013 http://t.co/9oyY985I4b

Nothing published (yet?) on the OWASP website. I suppose it will be uploaded soon. Otherwise, contact the speaker directly.

Any idea if Dr. Murdoch will post an updated version of his presentation online? The one posted at http://www.cl.cam.ac.uk/~sjm217/talks/#talk-ches12bankingsecurity doesn’t seem to have the ‘online banking security’ part he spoke of last night. 🙂
