The holidays are over and kids are back to school. For the security community, it means that security meetings are back too! The first OWASP Belgium Chapter meeting of the season was organised tonight. Here is my quick wrap-up.

This time, the meeting started in the afternoon with a technical workshop organised by SPION. Due to agenda conflicts, I did not attend it. I joined for the second part, organised in the classic format: after a brief introduction with news about the Chapter and the OWASP Foundation in general, two speakers presented their research.

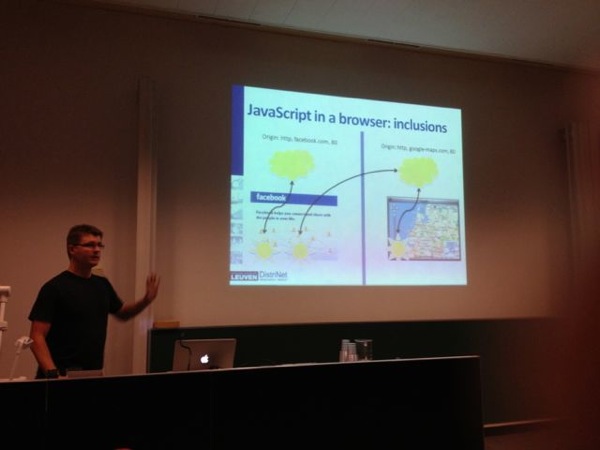

The first speaker was Steven Van Acker, who talked about remote JavaScript inclusions. There are plenty of publicly available JavaScript libraries on the Internet, and it’s very easy for developers to go shopping and use them instead of reinventing the wheel. Steven presented the results of research on how websites use those libraries. Is it really safe to use them “as is”? Always keep in mind that browsers don’t care about what they execute. A crawler was developed to download website content from the Internet (approximately 3.3M URLs were visited), and the included JavaScript code was extracted. Steven gave some statistics. The one that struck me was the top 10 of included JavaScript code: 50% of this top 10 is related to Google services (mainly Google Analytics)! Given the amount of JavaScript code included in websites, some questions arise:

- Should websites trust remote providers?

- Can we safely execute their code?

- What’s the quality of their maintenance?
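The study’s crawler was not described in detail, so what follows is only a minimal sketch of the extraction step, assuming pages have already been downloaded as (URL, HTML) pairs. It uses Python’s standard `html.parser` to collect `<script src=...>` URLs, keeps those served from a different hostname than the page, and counts each provider once per page to build a top-N list similar to the one Steven showed. The function names (`remote_inclusions`, `top_providers`) are hypothetical. Comparing exact hostnames is a simplification (a real crawler would probably group subdomains by registrable domain), and only static `<script src>` tags are seen, not scripts injected at runtime.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class ScriptSrcParser(HTMLParser):
    """Collects the src attribute of every <script> tag in a page."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            src = dict(attrs).get("src")
            if src:  # skip inline scripts and empty src attributes
                self.sources.append(src)


def remote_inclusions(page_url, html):
    """Return absolute URLs of scripts loaded from another host than page_url."""
    parser = ScriptSrcParser()
    parser.feed(html)
    page_host = urlparse(page_url).hostname
    remote = []
    for src in parser.sources:
        # Resolves relative ("/app.js") and protocol-relative ("//cdn/x.js") URLs.
        absolute = urljoin(page_url, src)
        if urlparse(absolute).hostname != page_host:
            remote.append(absolute)
    return remote


def top_providers(pages, n=10):
    """Count remote script hosts across (url, html) pairs, once per page."""
    counts = Counter()
    for page_url, html in pages:
        hosts = {urlparse(u).hostname for u in remote_inclusions(page_url, html)}
        counts.update(hosts)
    return counts.most_common(n)
```

For example, two pages that both load Google Analytics (one of them through a protocol-relative `//www.google-analytics.com/ga.js` URL) and one local script give `[('www.google-analytics.com', 2)]`.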

Then, again based on the findings, some oddities were observed:

- Cross-user scripting (ex: http://localhost/script.js)

- Cross-network scripting (ex: http://192.168.2.1/script.js)

- Stale IP-based remote inclusions

- Stale domain-based remote inclusions

- Typo-squatting XSS
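Some of these oddities can be flagged from the script URL alone. The sketch below assumes you already have the list of included script URLs; it labels loopback hosts as cross-user scripting, private-network addresses (as defined by Python’s `ipaddress` module) as cross-network scripting, and hostnames exactly one edit away from a known provider as possible typo-squatting. `KNOWN_HOSTS` is a hypothetical allow-list, not data from the study. Deciding whether an IP-based or domain-based inclusion is actually *stale* would additionally require checking whether the address or domain is still in use (DNS, WHOIS), which this sketch does not attempt.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical allow-list of legitimate script providers to compare against.
KNOWN_HOSTS = {"www.google-analytics.com", "ajax.googleapis.com", "code.jquery.com"}


def edit_distance(a, b):
    """Levenshtein distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (ca != cb)))  # substitution
        prev = cur
    return prev[-1]


def classify_inclusion(script_url):
    """Return a label for a remote script URL, or None if nothing looks odd."""
    host = urlparse(script_url).hostname
    if host is None:
        return None
    if host == "localhost":
        return "cross-user scripting"
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        ip = None  # not a literal IP address, so treat it as a domain name
    if ip is not None:
        # Loopback addresses are also "private", so test them first.
        if ip.is_loopback:
            return "cross-user scripting"
        if ip.is_private:
            return "cross-network scripting"
        return "IP-based inclusion (check that it is not stale)"
    for known in sorted(KNOWN_HOSTS):  # sorted for deterministic output
        if host != known and edit_distance(host, known) == 1:
            return f"possible typo-squatting of {known}"
    return None
```

With the two examples from the talk, `http://localhost/script.js` is labelled cross-user scripting and `http://192.168.2.1/script.js` cross-network scripting.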

To mitigate these risks, the following countermeasures were suggested:

- Execute the remote scripts in a sandbox (not always easy).

- Download the script locally.
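Downloading the script locally can be as simple as vendoring a copy and recording its hash, so that changes made by the provider are reviewed before being deployed instead of being executed blindly. This is a hedged sketch using only the Python standard library; `vendor_script` and `upstream_changed` are hypothetical helpers, and the `fetch` parameter exists only so that the download function can be swapped out (for example in tests).

```python
import hashlib
from pathlib import Path
from urllib.parse import urlparse
from urllib.request import urlopen


def fetch_url(url):
    """Download a URL and return its body as bytes."""
    with urlopen(url, timeout=10) as response:
        return response.read()


def vendor_script(url, dest_dir, fetch=fetch_url):
    """Save a remote script under dest_dir and return (local_path, sha256)."""
    body = fetch(url)
    # Reuse the file name from the URL path, with a fallback for bare URLs.
    name = Path(urlparse(url).path).name or "script.js"
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    local_path = dest / name
    local_path.write_bytes(body)
    return local_path, hashlib.sha256(body).hexdigest()


def upstream_changed(url, known_sha256, fetch=fetch_url):
    """True if the remote copy no longer matches the hash recorded when vendoring."""
    return hashlib.sha256(fetch(url)).hexdigest() != known_sha256
```

The site then references the local copy, and a periodic `upstream_changed` check tells you when the provider has published something new that deserves a look.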

The second talk reviewed tools and resources useful to gather information about a target:

- shodanhq.com

- serversniff.net

- robtex.com (with a good domain visualisation feature)

- Google advanced searches (intext:, inurl:, filetype:, etc.)

- Google Hacking DB

- Search engine optimisation tools (can crawl target websites for you)

- FOCA

- Maltego
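Several of these resources rely on Google’s advanced search operators. As an illustration, the helper below assembles a query from a few documented operators (`site:`, `inurl:`, `intitle:`, `intext:`, `filetype:`) and builds the corresponding search URL. The function names are hypothetical, and Google may change how it interprets operators over time, so treat the output as a starting point for manual searches.

```python
from urllib.parse import quote_plus

# Operators accepted by this sketch; Google supports others as well.
SUPPORTED_OPERATORS = ("site", "inurl", "intitle", "intext", "filetype")


def build_dork(terms="", **operators):
    """Combine free-text terms with Google advanced-search operators.

    Values containing spaces are wrapped in double quotes so that the
    operator applies to the whole phrase.
    """
    parts = [terms] if terms else []
    for name, value in operators.items():  # keyword order is preserved
        if name not in SUPPORTED_OPERATORS:
            raise ValueError(f"unsupported operator: {name}")
        if " " in value:
            value = f'"{value}"'
        parts.append(f"{name}:{value}")
    return " ".join(parts)


def search_url(query):
    """Return the Google search URL for a query string."""
    return "https://www.google.com/search?q=" + quote_plus(query)
```

For example, `build_dork("confidential", site="example.com", intitle="index of")` returns `confidential site:example.com intitle:"index of"`.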

Most of these tools are classics, but it was a good reminder, or a good way to populate your bookmarks! That was a good meeting to start the new season!