The second day is over. Here is my daily wrap-up. Today was a national strike day in France and a lot of problems were expected with public transport. However, the organization provided buses to help attendees travel between the city center and the venue. Great service as always 😉

After some coffee, the day started with another TLP:AMBER talk: “Preinstalled Gems on Cheap Mobile Phones” by Laura Guevara. So, to respect the TLP, nothing will be disclosed. Just keep in mind that if you use cheap Android smartphones, you get what you paid for…

Then, Brian Carter @

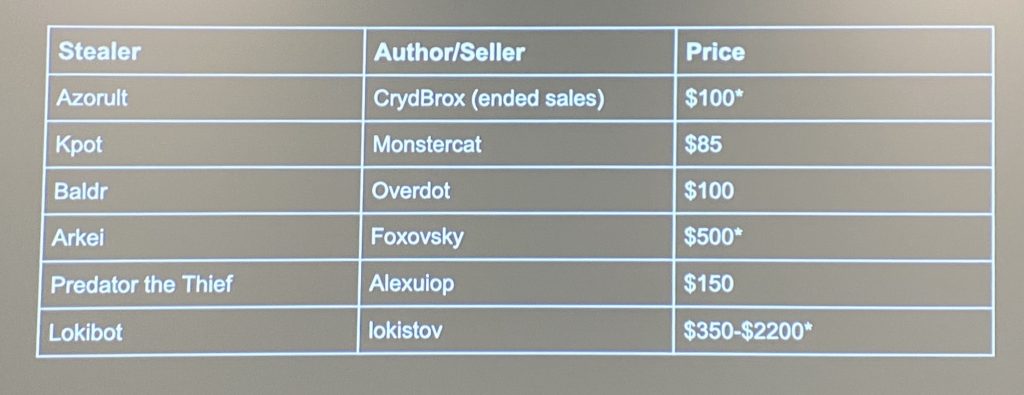

presented “Honor Among Thieves: How Stealer Malware Fuels an Underground Economy of Compromised Accountsâ€. It started with facts: Attackers make mistakes and leak online information like panels, configuration files or logs. You can find this information just via a browser, no need to hack them back.

Brian’s research goals were:

- Understand the value of stolen data

- Develop tools to collect and parse data

- Collect information that helps identify malware families based on their C2

- Develop detection rules to catch them

But, very important: we cannot commit crimes, contribute to malicious activities or harm victims of malware. The golden rule here is: be ethical! The research also had limitations: it’s impossible to collect everything and the data may not be representative! What was the collection process? Terabytes of data: 1M stealer log archives, many panels, builders, chat logs, forum posts, and market listings. Again, the “stolen data” was collected from ethical sources like VT, shares and open directories. Brian also commented on the economics of stealers: the market is relatively small today, only a few criminals are successful in their business, and stealer malware is low-volume. Here is an example of prices:

Then, once you have collected such a huge amount of data, what do you do with it? Warn users that they are compromised, research adversaries, develop countermeasures, attempt takedowns? This was an interesting talk.

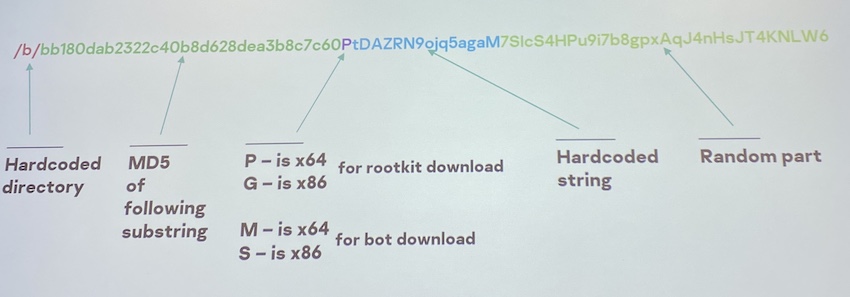

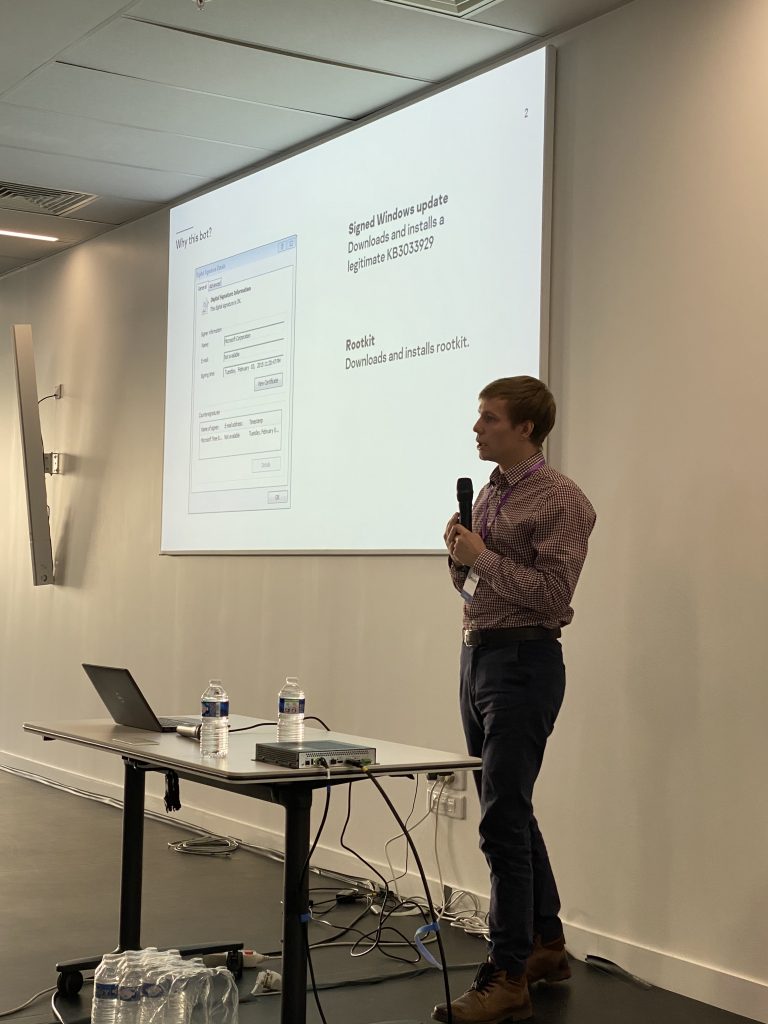

The next speakers were Alexander Eremin and Alexey Shulmin, who presented “Bot with Rootkit: Update and Mine!”. The research started with a “nice” sample that installed a Microsoft patch. Cool, isn’t it? Of course, the dropper was obfuscated and, once decoded, it used the classic VirtualAlloc() and GetModuleHandleA() to decrypt the data.

When they were able to extract strings, they continued to investigate and discovered interesting behaviors. First, the developer was nice enough to leave debugging comments via a write_to_log() function. The malware checked the OS version and the keyboard layout, and created a mutex. So far, nothing fancy, but the malware also installed a Microsoft patch if it was not already present: KB3033929. Why? The patch was required to pass the check_crypt32_version() check and to add SHA-2 support! Then, they explained how C2 communications are performed: over HTTP, encrypted with RC4 and Base64-encoded. Then, a rootkit is installed to finally deploy a cryptominer. The rootkit is similar to Necurs and uses IOCTL-like registry keys. Examples of supported commands:

- 0x220004 – update rootkit

- 0x220008 – update payload
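
The C2 encoding mentioned above (RC4 encryption, then Base64) can be illustrated with a minimal sketch. This is not the malware’s code: the key, the message and the function names `encode_message()` / `decode_message()` are made up for the example, and the real protocol details were not given in the talk summary.

```python
import base64


def rc4(key: bytes, data: bytes) -> bytes:
    """Plain RC4: key scheduling (KSA) followed by keystream generation (PRGA)."""
    if not key:
        raise ValueError("key must not be empty")
    # KSA: permute the state array according to the key
    s = list(range(256))
    j = 0
    for i in range(256):
        j = (j + s[i] + key[i % len(key)]) % 256
        s[i], s[j] = s[j], s[i]
    # PRGA: XOR each data byte with the next keystream byte
    out = bytearray()
    i = j = 0
    for byte in data:
        i = (i + 1) % 256
        j = (j + s[i]) % 256
        s[i], s[j] = s[j], s[i]
        out.append(byte ^ s[(s[i] + s[j]) % 256])
    return bytes(out)


def encode_message(key: bytes, plaintext: bytes) -> str:
    """Hypothetical helper: encrypt with RC4, then wrap in Base64 for HTTP transport."""
    return base64.b64encode(rc4(key, plaintext)).decode("ascii")


def decode_message(key: bytes, blob: str) -> bytes:
    """Hypothetical helper: reverse of encode_message (RC4 is symmetric)."""
    return rc4(key, base64.b64decode(blob))


if __name__ == "__main__":
    key = b"not-the-real-key"  # assumption: placeholder key
    blob = encode_message(key, b"os=win7;layout=0409")
    print(blob)
    print(decode_message(key, blob))
```

From an analyst’s point of view, this is why extracting the RC4 key from the sample is usually enough to read captured traffic: the same function decrypts and encrypts.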

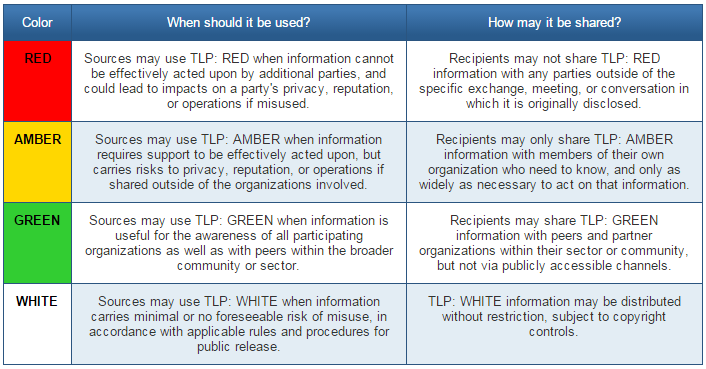

After a caffeine refill, it was the turn of Tom Ueltschi, who presented “DESKTOP-Group – Tracking a Persistent Threat Group (using Email Headers)”. Tom is a regular speaker at Botconf and always provides good content. This talk was tagged as TLP:GREEN. Good idea: he will try to make a “white” version of his slides as soon as possible. Remember that TLP:GREEN means “Limited disclosure, restricted to the community.”

Before the lunch break, two short presentations were scheduled. The first was “The Bagsu Banker Case” by Benoît Ancel. Sorry, TLP:AMBER again.

The following talk was “Tracking Samples on a Budget” by <redacted>. It covered a personal project, running for two years now, that shows how to build a collection of malware samples… as a student, with no budget! What are the (free) sources available? Open-source repositories, honeypots, pivots, feeds, sandboxes, existing malware zoos like malshare or malc0de, etc. You can also run your own honeypots but it’s difficult to deploy a lot of them. The talk covered the components put in place to crawl the web and how to avoid some stupid limitations that you’ll face. Then comes the post-processing:

- Upload new samples to VT

- Enrichment (metadata, strings, …)

- YARA rules!

- Pivots

A good idea is also to search recursively for open directories that remain a goldmine. Here is the sample tracking lifecycle as implemented:

Acquire URL > Crawl > Download > Postprocessing > Store > Pivot
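
Two pieces of this lifecycle are easy to sketch: storing only unique samples and pivoting from a sample URL to its parent directories (candidates for open directories). The code below is my own illustration, not the speaker’s implementation; `SampleStore`, `parent_directories()` and the `example.test` URLs are hypothetical.

```python
import hashlib
from typing import Dict, List, Set, Tuple
from urllib.parse import urlsplit, urlunsplit


def parent_directories(url: str) -> List[str]:
    """Return the parent directory URLs of `url`, deepest first.

    The last path segment is always dropped (assumed to be the sample file).
    """
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    parents = []
    for depth in range(len(segments) - 1, -1, -1):
        path = "/" + "/".join(segments[:depth])
        if not path.endswith("/"):
            path += "/"
        parents.append(urlunsplit((parts.scheme, parts.netloc, path, "", "")))
    return parents


class SampleStore:
    """In-memory store that deduplicates samples by SHA-256."""

    def __init__(self) -> None:
        self._sources: Dict[str, Set[str]] = {}

    def add(self, content: bytes, source_url: str) -> Tuple[str, bool]:
        """Record a sample; return (sha256, True if never seen before)."""
        digest = hashlib.sha256(content).hexdigest()
        is_new = digest not in self._sources
        self._sources.setdefault(digest, set()).add(source_url)
        return digest, is_new

    def sources(self, digest: str) -> Set[str]:
        """All URLs where a given sample was seen (useful for pivoting)."""
        return set(self._sources.get(digest, set()))


if __name__ == "__main__":
    store = SampleStore()
    url = "http://example.test/files/drop/sample.exe"
    digest, is_new = store.add(b"MZ...fake sample bytes", url)
    print(digest, is_new)
    # Next crawl candidates: directories that may expose an index listing
    for candidate in parent_directories(url):
        print(candidate)
```

Only new hashes would go through the expensive post-processing steps, while every source URL is kept because it may lead to other samples.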

What about the results? After running for 2 years, 270K unique samples have been discovered, 25% of them not on VT, 600GB of data collected, and 78% of the samples have a 5+ score on VT. I had the opportunity to talk with the speaker later: it’s a great project… The code has been running fine for 2 years and just does the job! Amazing project!

After lunch, we had another restricted presentation, tagged TLP:RED (sorry, interesting content that can’t be disclosed), by Thomas Dubier and Christophe Rieunier: “Botnet Tracking Story: from Spam Mail to Money Laundering”.

The next talk was “Finding Neutrino Botnet: from Web Scans to Botnet Architecture” by Kirill Shipulin and Alexey Goncharov. This research started with some interesting hits on a honeypot. They adapted the honeypot to respond positively and mimic a webshell. The scan was brute-forcing different webshells with a strange command: “die(md5(Ch3ck1ng))”. The malware they found had a classic behavior: check if it is already installed, exfiltrate system information and download/execute a payload (a cryptominer in this case). It implemented persistence via WMI, was fileless and also killed its competitors.
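
To give an idea of what “responding positively” could look like, here is a small sketch of a fake webshell reply. It assumes the scanner expects the MD5 hex digest of the probed string back, which is what a real PHP webshell would print (older PHP versions treat the bare word Ch3ck1ng as a string). The function name `fake_webshell_reply()` and the regular expression are mine, not the speakers’ honeypot code.

```python
import hashlib
import re
from typing import Optional

# Matches probes such as die(md5(Ch3ck1ng)) with or without quotes around the token
PROBE = re.compile(r"die\(\s*md5\(\s*['\"]?(\w+)['\"]?\s*\)\s*\)")


def fake_webshell_reply(payload: str) -> Optional[str]:
    """Return the body a working webshell would print, or None if this is not a probe."""
    match = PROBE.search(payload)
    if match is None:
        return None
    token = match.group(1)
    # A real shell would evaluate md5(token) and die() with the hex digest as output
    return hashlib.md5(token.encode("ascii")).hexdigest()


if __name__ == "__main__":
    print(fake_webshell_reply("die(md5(Ch3ck1ng));"))
    print(fake_webshell_reply("ls -la"))
```

A honeypot answering like this looks like a valid webshell to the scanner, which is then likely to send its next stage.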

The next malware to be analyzed was BackSwap: “BackSwap Malware Campaign Evolution” by Carlos Rubio Ricote and David Pastor Sanz. This malware was found by ESET in May 2018 via trojanized apps (OllyDbg, Filezilla, ZoomIt, …). It used PIC (Position Independent Code) and shellcodes hidden in BMP images (see the DKMC tool). They explained how the malware worked, how the configuration was encrypted and, once decoded, what the parameters were: the statistics URL, the C2, the User-Agent to use, and the injection delimiter. They decrypted the web inject and explained the technique used: via the navigation bar or the developer console. Then, they reviewed some discovered campaigns targeting banks in Poland and Spain.

After the afternoon coffee break, Mathieu Tartare came on stage to talk about “Winnti Arsenal: Brand-new Supplies”. Winnti is a group that is often cited in the news and that compromised multiple organizations (telco, software publishers, healthcare, gambling, etc.). They specialize in supply-chain attacks. They seem to have been active since 2013 (first public report) and in 2017… there was the famous CCleaner case! In 2018, the gaming industry was compromised. The 1st part of this talk covered this. The technique used by the malware was CRT patching: a function is called to unpack/execute the malicious code before returning to the original code. The payload was packed using a custom packer based on RC4. The first stage is a downloader that gets its C2 config, then gathers information about the victim’s computer. Among other things, the C: drive name and volume ID are exfiltrated. The 2nd stage decrypts a payload that was encrypted using… the volume ID! Finally, a cryptominer is installed. Mathieu also covered two backdoors. The first one, PortReuse, works like good old port-knocking: it sniffs the network traffic and waits for a specific magic packet. The second one was ShadowPad.

The last talk for today was “DFIR & Crisis Management – Post-mortems & Lessons Learned in the Pain from the Field” by Vincent Nguyen. He was involved in many security incidents and explained some interesting facts about them: how they worked, what they found (or not 😉) and also, very important, the lessons learned to improve the IR process.

The schedule ended with a set of lightning talks: 19 talks of 3 minutes each, with a lot of interesting information, tools, stories, etc.