I’m back from the last OWASP Belgium chapter meeting. Here is a quick wrap-up. Classic scenario: the event started with Seba, who gave some updates about the OWASP Foundation. Today’s event was part of a bigger one called the OWASP European Tour. Over a few weeks, all European chapters organise a local event. There is also a CTF organised. Some interesting new projects were highlighted:

If you’re not an OWASP member yet, there is currently a cool action ongoing called the “Membership drive”. Have a look at it. That’s the news for this time. Now, let’s review the scheduled talks.

The first speaker was Eoin Keary: “Needles in haystacks, we are not solving the app sec problem & html hacking the browser, CSP is dead”. Today it’s easy to find good examples of data breaches in your country. They can be used to demonstrate to your boss that security is more and more important. Why is it easier to find examples? Because of the increasing number of attacks (e.g. from hacktivists). Most big organisations in the world have already been hacked, even if they have huge security budgets. Is that normal? We spend a lot of money on security, but the cost of security incidents increases too (huge business impact). “There’s money in them there web apps”, said Eoin. Every application is unique and therefore not properly secured. Compare this with networks, which are easier to configure: everybody talks TCP/IP and uses ports. Just put a firewall in place and you’re (almost) done. You can’t do the same with web apps. Eoin’s first conclusion is that we are doing it wrong. Nothing new, but what is the problem?

According to Eoin, we are facing an asymmetric arms race: an annual pentest cycle gives minimal security, and a pentest does not guarantee results. Web applications are too complex to be covered from A to Z. Think about 50 variables which could potentially be vulnerable to x CVEs. It’s impossible to review all the test cases in a reasonable amount of time. Pentesting can be compared to the visible part of the iceberg. A pentester has “x” days to perform the tests while an attacker has plenty of time. Also, are pentesters as good as the bad guys? How experienced are they? When new code is pushed to production, vulnerabilities might be present until the next pentest (in weeks, months, years?). Are you sure that your testing tools don’t have security flaws or bugs too? Then Eoin gave examples of vulnerabilities that cannot be discovered by tools, only by human intelligence (e.g. business logic). The examples were based on abusing CSP (“Content Security Policy”):

- Single quote issue (HTML injection) quote damping?

- Form rerouting

- <base> jumping

- Element override (<input> attribute in HTML5)

- Hanging <textarea>

To summarise: blackbox tests are useful to prove that applications are vulnerable, but they are not the best way to secure an application (some risks remain). The only way is to perform code review. Eoin compared security with cheeseburgers: they are tasty. We know that they are bad for us, but who cares? We write insecure code until we get hacked! That is called the cheeseburger approach. Excellent talk, I liked it!

The second talk was “Teaching an old dog new tricks: securing development with PMD” by Justin Clarke. Tools that search for bugs in software code have existed for a while. Some perform static analysis, others perform dynamic analysis. But the tools used by developers are meant to catch code quality issues and address security less (or not at all).

The idea explained by Justin was to extend existing solutions to perform security checks too. He focused on PMD. First, what is PMD? In the audience, only one guy was using this tool. It’s an open source static analyser for Java source code. It searches for lots of bugs, but they are not really related to security. Justin’s presentation was about extending PMD with self-written security checks. It’s quite easy to extend… if you are a developer. Personally, the talk went too deep for me. Custom rules are written as XPath expressions. With my very basic knowledge, I found this similar to using grep to search for some patterns in the source code.
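To make the "XPath as grep for source code" idea a bit more concrete, here is a minimal Python sketch. It does not use PMD at all: the XML tree, its node names (`Method`, `MethodCall`) and the two rule names are invented for illustration, and real PMD rules run against PMD's own Java syntax tree. The only point is to show how a rule can be "a name plus an XPath expression" evaluated against a tree representation of the code.

```python
import xml.etree.ElementTree as ET

# Toy XML rendering of a syntax tree. Node and attribute names are
# invented for this example; they are NOT PMD's real AST node names.
TOY_AST = """
<CompilationUnit>
  <Method name="runReport">
    <MethodCall target="Runtime" name="exec"/>
    <MethodCall target="logger" name="info"/>
  </Method>
  <Method name="loadUser">
    <MethodCall target="statement" name="executeQuery"/>
  </Method>
</CompilationUnit>
"""

# Hypothetical "security rules": a rule name mapped to an XPath expression.
RULES = {
    "AvoidRuntimeExec": ".//MethodCall[@name='exec']",
    "ReviewRawSqlQuery": ".//MethodCall[@name='executeQuery']",
}


def find_violations(ast_xml, rules):
    """Return (rule name, enclosing method name) pairs for every match."""
    root = ET.fromstring(ast_xml.strip())
    violations = []
    for method in root.iter("Method"):
        for rule_name, xpath in rules.items():
            # findall() evaluates the XPath relative to this method node.
            if method.findall(xpath):
                violations.append((rule_name, method.get("name")))
    return violations


if __name__ == "__main__":
    for rule_name, method_name in find_violations(TOY_AST, RULES):
        print(f"{rule_name}: have a look at method {method_name}()")
```

Unlike grep, the expression matches on the structure of the code (a call node with a given name inside a method), not on raw text, so comments or string literals that happen to contain "exec" would not trigger the rule.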

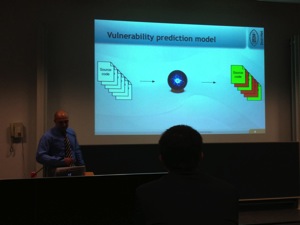

After a short break, the last guest, Aram Hovsepyan, talked about “Vulnerability prediction in Android applications”. Why? Android is an attractive target (75% of market share in Q1 2013) and the number of apps keeps growing. App security is not guaranteed by the platform provider (read: Google, for example).

So, a single vulnerability could affect a large number of users, and Android apps are not yet massively scanned (compared to regular applications for PC/Mac). How to find vulnerabilities?

- Code inspection

- Penetration testing / security testing

- Static code analysis

- With some “magic” – vulnerability prediction models!

That’s what Aram is doing. This works by using a “magic ball”, as he calls it (machine learning). The model will not say “this line of code is dangerous” but “have a look at this file, it might be suspicious”. What are the existing tools & techniques?

- Size of a component (large components are more likely to be vulnerable)

- Fetch the features from the components

- Determine the vulnerabilities (there are public databases such as MFSA or the National Vulnerability Database)

- Investigate the correlation
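The last step above, investigating the correlation, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the component names, sizes and vulnerability counts below are made up, and the Pearson coefficient is just one possible way to measure the relationship; the talk did not say which statistic is used.

```python
from math import sqrt

# Hypothetical data, invented for this example:
# (component name, lines of code, number of known vulnerabilities).
COMPONENTS = [
    ("parser", 1200, 3),
    ("network", 3400, 7),
    ("ui", 800, 1),
    ("storage", 2100, 4),
    ("crypto", 500, 2),
]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)


def size_vs_vulnerabilities(components):
    """Correlate component size (the feature) with known vulnerabilities."""
    sizes = [size for _, size, _ in components]
    vulnerabilities = [count for _, _, count in components]
    return pearson(sizes, vulnerabilities)


if __name__ == "__main__":
    r = size_vs_vulnerabilities(COMPONENTS)
    print(f"correlation between size and known vulnerabilities: {r:.2f}")
```

A coefficient close to 1 on real data would support the "large components are more likely to be vulnerable" intuition; a value near 0 would suggest that size alone is a poor predictor.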

Some other interesting points to keep an eye on:

- Developer activity

- Number of import statements

What’s the approach used by Aram? Use the source code itself in a tokenised form, with the token frequencies as features. Static code analysis is performed using Fortify, so that each file is labelled either clean or vulnerable. Tokens and vulnerabilities are then used to build a prediction model (machine learning). Experiment #1: Can we predict vulnerabilities in a future version of an app based on its first version? Experiment #2: Can we build a generalised predictor that works on all apps? Based on the results, what are the most influential features?

- Error handling

- “if” (branching – app complexity)

- Null (pointer algebra)

- Java, org (import statements)

- New, Log (others)
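Here is a minimal sketch of the "token frequencies as features" idea, to show why tokens such as `Log`, `null` or `if` can end up being influential. Everything in it is an assumption made for illustration: the four training snippets and their labels are invented (in the talk the labels came from Fortify), and a small hand-written naive Bayes classifier is swapped in because the write-up above does not say which learning algorithm was used.

```python
import math
import re
from collections import Counter

# Identifiers and keywords; operators and punctuation are ignored here.
TOKEN_RE = re.compile(r"[A-Za-z_]\w*")


def tokenise(source):
    """Turn source code into a bag of tokens with their frequencies."""
    return Counter(TOKEN_RE.findall(source))


class TokenNaiveBayes:
    """Multinomial naive Bayes over token counts, with add-one smoothing."""

    def fit(self, sources, labels):
        self.labels = sorted(set(labels))
        self.file_counts = Counter(labels)
        self.token_counts = {label: Counter() for label in self.labels}
        for source, label in zip(sources, labels):
            self.token_counts[label].update(tokenise(source))
        self.vocabulary = set()
        for counts in self.token_counts.values():
            self.vocabulary.update(counts)
        return self

    def _log_score(self, tokens, label):
        total_files = sum(self.file_counts.values())
        score = math.log(self.file_counts[label] / total_files)
        counts = self.token_counts[label]
        denominator = sum(counts.values()) + len(self.vocabulary)
        for token, frequency in tokens.items():
            if token in self.vocabulary:  # tokens never seen in training are skipped
                score += frequency * math.log((counts[token] + 1) / denominator)
        return score

    def predict(self, source):
        tokens = tokenise(source)
        return max(self.labels, key=lambda label: self._log_score(tokens, label))


# Invented training files; the labels are hypothetical, not real findings.
TRAINING = [
    ("try { db.query(input); } catch (Exception e) { Log.d(TAG, e); }", "vulnerable"),
    ("if (user != null) { Log.d(TAG, user); } else { Log.d(TAG, null); }", "vulnerable"),
    ("int add(int a, int b) { return a + b; }", "clean"),
    ("String greet(String name) { return prefix + name; }", "clean"),
]


def train_demo_model():
    sources = [source for source, _ in TRAINING]
    labels = [label for _, label in TRAINING]
    return TokenNaiveBayes().fit(sources, labels)


if __name__ == "__main__":
    model = train_demo_model()
    unseen = "try { send(data); } catch (Exception e) { Log.d(TAG, null); }"
    print("have a look at this file:", model.predict(unseen))
```

Note that the output is a label per file, which matches the "have a look at this file, it might be suspicious" behaviour described earlier: the model never points at a specific line.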

Conclusions: it worked quite well for Java files in Android apps, and they are now trying to apply the same technique to Firefox and PHP code. The presentation started with interesting ideas but, slide after slide, it became more complex and fuzzy. Again, I’m not a developer. That’s all for this time!
