I received my Loggly beta account (thanks to them!) a few days ago and started to test this cloud service more intensively. I won’t explain again what Loggly is; I already posted an article on this service.

For me, services like Loggly are perfect examples of the cloud, with all its pros and cons. Small organizations may find here a perfect tool to analyze their logs with limited effort. On the other hand, there are two main issues regarding the security of the data you send to the cloud.

First, how do you send your logs to “the cloud” safely and, once that is done, how do you ensure they are stored safely? “Safely” here covers confidentiality, integrity and availability. Don’t forget that your logs contain sensitive information like user names, IP addresses, process names and much more! Regarding the storage of your data, there is nothing special you can do except trust your cloud provider (if you take a paid subscription, read the contract terms carefully). You cannot send the events encrypted, as the application running in the cloud needs to parse and index them. But the first issue (sending them safely to Loggly) can be addressed; let’s see how.

Even though it is cloud based, Loggly works like any other log management solution and performs the following tasks:

Loggly collects events using “inputs”. At the moment, inputs support the following protocols:

- Syslog UDP

- Syslog TCP

- HTTP(S)

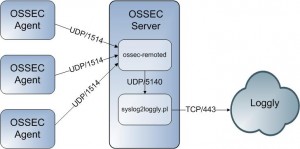

Forget the UDP protocol, which is clearly unreliable over the Internet. Syslog over TCP works well but lacks confidentiality. The HTTP(S) protocol is available through a simple API, but HTTP is not a common way to send events and is rarely implemented in applications, which often support only Syslog. To solve this problem, I wrote a small Perl script which acts as a simple Syslog daemon and sends all the received events to Loggly via HTTPS. My example is based on an OSSEC server:

The OSSEC server can forward its alerts to a remote Syslog server via the “syslog_output” directive. In your ossec.conf file, add the following lines and restart OSSEC:

    <syslog_output>
      <server>127.0.0.1</server>
      <port>5140</port>
    </syslog_output>
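Before pointing OSSEC at the forwarder, it can help to check that something is actually listening on port 5140. The following is only a minimal Python sketch (not part of the Perl script): it assumes the forwarder accepts Syslog over UDP on 127.0.0.1:5140, and the function names, the `test` tag and the simplified message layout are illustrative choices.

```python
import socket


def build_syslog_message(text, facility=1, severity=5, tag="test"):
    """Build a minimal syslog-style line: <PRI>TAG: TEXT (no timestamp or hostname)."""
    pri = facility * 8 + severity  # syslog priority = facility * 8 + severity
    return f"<{pri}>{tag}: {text}".encode("utf-8")


def send_test_event(text, host="127.0.0.1", port=5140):
    """Send one UDP datagram to the local forwarder (UDP transport is an assumption)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(build_syslog_message(text), (host, port))
```

Calling `send_test_event("hello from the OSSEC host")` while the forwarder runs in verbose mode should make the test line show up in its output.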

The Perl script accepts the following arguments:

    # ./syslog2loggly.pl -h
    syslog2loggly.pl -k <key> [-D] [-h] [-v] [-p port]
      -D      : Run as a daemon
      -h      : This help
      -k key  : Your Loggly HTTP input API key (see loggly.com)
      -p port : Bind to port (default 5140)
      -v      : Increase verbosity

Log in to your Loggly account, create a new input and select “HTTP” as the service type. Don’t worry, it also supports HTTPS. Once done, go to the newly created input configuration and Loggly will give you the API key to use to submit events:

https://logs.loggly.com/inputs/xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx
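To illustrate what submitting an event to such an input might look like, here is a small Python sketch using only the standard library. The base URL comes from the example above; the `text/plain` content type, the function names and the use of a plain POST body are assumptions, not a description of the Perl script or of the official API.

```python
import urllib.request

# Base URL taken from the input URL shown above; the key is appended to it.
LOGGLY_INPUT_BASE = "https://logs.loggly.com/inputs/"


def build_request(key, event):
    """Prepare an HTTPS POST carrying one log line as the request body."""
    return urllib.request.Request(
        LOGGLY_INPUT_BASE + key,
        data=event.encode("utf-8"),
        headers={"Content-Type": "text/plain"},  # assumed content type
        method="POST",
    )


def send_event(key, event, timeout=10):
    """Submit one event; returns True on a 2xx reply, raises URLError on failure."""
    with urllib.request.urlopen(build_request(key, event), timeout=timeout) as resp:
        return 200 <= resp.status < 300
```

Because the URL starts with `https://`, the event travels over TLS, which is the whole point of going through the HTTP input instead of plain Syslog.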

This is the key to pass to the Perl script using the “-k” switch. The script can be run as a daemon and forks a child to send each received event to Loggly (to avoid blocking under heavy load). It retries sending the event three times, with a pause of 15 seconds between retries.
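The receive-and-retry behaviour described above could be sketched in Python as follows. This is not the Perl script: it uses one thread per event instead of a forked child, it assumes a UDP listener, and it reads “three times” as three attempts in total. The `send` argument is a hypothetical callable that submits one event over HTTPS and returns True on success.

```python
import socket
import threading
import time

MAX_ATTEMPTS = 3   # assumption: three attempts in total
RETRY_DELAY = 15   # seconds to wait between attempts


def deliver(event, send, sleep=time.sleep):
    """Try to send one event up to MAX_ATTEMPTS times; return True on success."""
    for attempt in range(1, MAX_ATTEMPTS + 1):
        try:
            if send(event):
                return True
        except OSError:
            pass  # network errors (urllib's URLError included) count as a failed attempt
        if attempt < MAX_ATTEMPTS:
            sleep(RETRY_DELAY)
    return False


def serve(send, host="127.0.0.1", port=5140):
    """Receive syslog datagrams and hand each one to its own worker thread."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        while True:
            data, _addr = sock.recvfrom(8192)
            event = data.decode("utf-8", errors="replace")
            # A slow or failing upload only blocks its own worker, not the listener.
            threading.Thread(target=deliver, args=(event, send), daemon=True).start()
```

Handing each event to its own worker keeps the listener free to receive new events while an upload waits out its 15-second pause, at the cost of one thread per pending event under heavy load.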

The script is available here. As usual, comments and feedback are welcome!

This is great, Xavier! Now if Loggly would only support OSSEC natively. 🙂

Encryption and TCP is a great step forward in making logging in the cloud successful. There are some other issues that still need to be addressed, such as data contamination, but that is not something under our control.

Hi Raffy,

Thanks for the feedback! Good to hear that Syslog over TLS is supported!

BTW, my name is Xavier (@xme) 😉

This is fantastic. Thanks Daniel!

Just an FYI, Loggly also accepts Syslog over TLS, if you have a non-free account!