I installed a new server that uses the best features of Solaris 10 (6/06): ZFS (now officially supported by Sun) and zones.

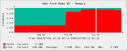

A few hours after the setup, I started the applications and the data transfer. Almost immediately, memory was nearly full (only 300MB free). Yet no large process was running and nothing looked strange: the server was operating perfectly.

While googling for help, I found an interesting sun.com blog post, The Dynamics of ZFS:

The ARC Cache

The most interesting caching occurs at the ARC layer. The ARC manages the memory used by blocks from all pools (each pool servicing many filesystems). ARC stands for Adaptive Replacement Cache and is inspired by a paper of Megiddo/Modha presented at the FAST'03 Usenix conference.

The ARC manages its data keeping a notion of Most Frequently Used (MFU) and Most Recently Used (MRU), balancing intelligently between the two. One of its very interesting properties is that a large scan of a file will not destroy most of the cached data.

On a system with free memory, the ARC will grow as it starts to cache data. Under memory pressure the ARC will return some of its memory to the kernel until low memory conditions are relieved.

We note that while ZFS has behaved rather well under 'normal' memory pressure, it does not appear to behave satisfactorily under swap shortage. The memory usage pattern of ZFS is very different to other filesystems such as UFS and so exposes VM layer issues in a number of corner cases. For instance, a number of kernel operations fail with ENOMEM without even attempting a reclaim operation. If they did, then ZFS would respond by releasing some of its own buffers, allowing the initial operation to then succeed.

The fact that ZFS caches data in the kernel address space does mean that the kernel size will be bigger than when using traditional filesystems. For heavy duty usage it is recommended to use a 64-bit kernel, i.e. any Sparc system or an AMD configured in 64-bit mode. Some systems that have managed in the past to run without any swap configured should probably start to configure some.

The behavior of the ARC in response to memory pressure is under review.
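To make the "a large scan will not destroy the cached data" property more concrete, here is a toy sketch in Python. It is not the ZFS implementation: the class name `TwoListCache` and its parameters are made up for illustration, and the split between the two lists is fixed, whereas the real ARC adapts it. It only shows why keeping a separate list for blocks that were used more than once protects them from a one-pass scan.

```python
from collections import OrderedDict


class TwoListCache:
    """Toy cache with a 'recent' (MRU) list and a 'frequent' (MFU) list.

    Hypothetical illustration only: unlike the real ARC, the size of
    each list is fixed instead of being adapted at run time.
    """

    def __init__(self, recent_size, frequent_size):
        self.recent_size = recent_size
        self.frequent_size = frequent_size
        self.recent = OrderedDict()    # blocks seen exactly once
        self.frequent = OrderedDict()  # blocks seen at least twice

    def access(self, block):
        """Record an access and return True on a cache hit."""
        if block in self.frequent:
            self.frequent.move_to_end(block)
            return True
        if block in self.recent:
            # Second access: promote from the recent list to the frequent list.
            del self.recent[block]
            self.frequent[block] = True
            if len(self.frequent) > self.frequent_size:
                self.frequent.popitem(last=False)  # evict the oldest entry
            return True
        # Miss: new blocks only ever enter the recent list.
        self.recent[block] = True
        if len(self.recent) > self.recent_size:
            self.recent.popitem(last=False)
        return False


cache = TwoListCache(recent_size=4, frequent_size=4)

# Touch a small working set twice so it lands in the frequent list.
hot = ["a", "b", "c"]
for _ in range(2):
    for block in hot:
        cache.access(block)

# One large sequential scan of blocks that are never read again.
for i in range(1000):
    cache.access(f"scan-{i}")

hot_survived = all(block in cache.frequent for block in hot)
print(hot_survived)  # True: the scan only churned the recent list
```

With a single LRU list of the same total size, the 1000 scanned blocks would have pushed out `a`, `b` and `c`.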

[Edited 10/07/2006]

I got more info from a Sun engineer:

From bug ID 6359922:

ZFS does (or at least attempts to) throttle its memory usage. Note that it does not cache the same way that UFS does. ZFS uses an Adaptive Replacement Cache (ARC). This cache is designed to provide optimal performance for filesystem activity. It uses a set of kmem_caches to hold data blocks and manages these blocks using MRU and MFU lists.

In the absence of any other memory pressure, ZFS will consume up to 3/4 of physical memory for its cache (so 3GB on your 4GB machine). We throttle the amount of non-evictable memory in our cache, trying to keep it below 1/2 the current cache size. So at least 1/2 of the memory in the cache is always "evictable", meaning it can be returned to the system on demand. But if no demands are made by the system, the memory will not be returned.

The cache is designed to react to memory pressure by evicting cache contents and reducing its cache size. It's clear that we need to do some tuning in this area.
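The numbers in that answer are easy to check. The small Python sketch below only restates the two ratios quoted above (3/4 of physical memory as the cache ceiling, at least 1/2 of the cache evictable). The function names are mine and are not ZFS tunables or kernel symbols.

```python
GIB = 1024 ** 3


def arc_ceiling(physical_bytes):
    """Upper bound on the cache size: 3/4 of physical memory (ratio from the quote)."""
    return physical_bytes * 3 // 4


def evictable_floor(cache_bytes):
    """Memory that should stay evictable: at least 1/2 of the current cache size."""
    return cache_bytes // 2


physical = 4 * GIB                     # the 4GB machine from the quote
ceiling = arc_ceiling(physical)
reclaimable = evictable_floor(ceiling)

print(ceiling / GIB)      # 3.0
print(reclaimable / GIB)  # 1.5
```

So on a 4GB machine with a fully grown cache, roughly 1.5GB should still be reclaimable on demand, even though the memory looks "used".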

So in other words: the behaviour you are noticing is normal (works as designed).

You could use the following to examine the memory usage:

$ mdb -k

> ::memstat

Rgds,