The HTTP protocol defines a list of response status codes that help the server and the browser communicate. Every time a server responds to a browser request, it sends a status code. The most common ones are “200”, which means “Everything is ok, here is some food!”, and “404”, which means “Not found”. A 404 may be caused by the client (for example, a typo in the URL entered in the browser) or by the developer/administrator who forgot to copy files or made a typo in the code. That’s why the number of 404 errors is directly related to the type of environment. During development and test phases, it’s common to have more errors. In a production environment, on the other hand, the number of 404 errors should be limited and the main source of errors will be the client/browser.

Sometimes, “404” errors are considered useless by webmasters and are simply ignored in their reports. After all, their goal is to know how many visitors browsed their websites. From a security perspective, however, those errors can be very helpful for detecting unusual traffic targeting a website.

I analyzed one year of my blog logs (yes, I’ve a long retention policy!). Some facts to start:

- Total hits: 9.534.062

- 404 errors: 343.606 (3.6%)
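For readers who want to produce this kind of count themselves, here is a minimal Python sketch. It is not the tooling behind the numbers above. It assumes the Apache "combined" log format, and the sample lines, the regular expression and the function names (`count_statuses`, `share`) are made up for illustration.

```python
import re
from collections import Counter

# Assumption: Apache common/combined log format, where the quoted request
# line is immediately followed by the three-digit status code.
LOG_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+)[^"]*" (?P<status>\d{3})\b')

def count_statuses(lines):
    """Return a Counter mapping status code (as a string) to number of hits."""
    counts = Counter()
    for line in lines:
        match = LOG_RE.search(line)
        if match:  # silently skip lines that do not look like log entries
            counts[match.group("status")] += 1
    return counts

def share(counts, status):
    """Percentage of all parsed hits that returned the given status."""
    total = sum(counts.values())
    return 100.0 * counts[status] / total if total else 0.0

# Made-up sample lines (documentation IP ranges), not taken from real logs.
sample = [
    '203.0.113.5 - - [04/Aug/2011:10:00:00 +0200] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '203.0.113.5 - - [04/Aug/2011:10:00:01 +0200] "GET /feed/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
    '198.51.100.7 - - [04/Aug/2011:10:00:02 +0200] "GET /old HTTP/1.1" 301 0 "-" "Mozilla/5.0"',
    '198.51.100.9 - - [04/Aug/2011:10:00:03 +0200] "GET /web.rar HTTP/1.1" 404 310 "-" "-"',
]

counts = count_statuses(sample)
print(f"404 share: {share(counts, '404'):.1f}%")  # prints "404 share: 25.0%"
```

On a real server you would pass an open log file instead of `sample`, for example `count_statuses(open("access.log"))`, where the file name is again only an example.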

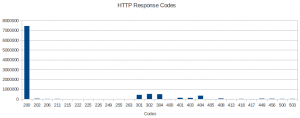

As you can see in the graph below, the 404 error code comes in fifth position, after the classic 200 and 3xx codes.

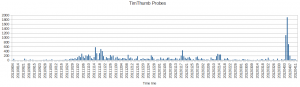

As I’m trying to keep the blog clean, this huge amount of “not found” errors looked strange to me, so I decided to generate more statistics. What can we deduce? For a while, the big winner was the TimThumb vulnerability discovered in August 2011. The exploit was released on the 3rd of August and the first attempt hit me on the 4th! Even today, I still receive plenty of probes (see this month):

The TimThumb scans come from three main sources, as seen on the Google map below (the live map is available here).

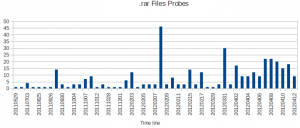

Another trend: more and more .rar archive files are being probed, especially this month. Why? I have absolutely no idea! If you have ideas, feel free to post a comment!

The top-10 of requested .rar files is:

- /mirserver.rar

- /web.rar

- /www.rar

- /mirserver1.rar

- /wwwroot.rar

- /youxi.rar

- /mh.rar

- /manhua.rar

- /mirserver2.rar

- /mirserver3.rar
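A ranking like the one above can be rebuilt from the raw log with a few lines of Python. This is only a sketch under assumptions: Apache "combined" log format, made-up sample lines, and a hypothetical helper name `top_missing`. It counts only requests answered with a 404 and ignores any query string.

```python
import re
from collections import Counter

# Assumption: Apache common/combined log format.
LOG_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+)[^"]*" (?P<status>\d{3})\b')

def top_missing(lines, suffix, n=10):
    """Return the n most requested paths ending in `suffix` that got a 404."""
    counts = Counter()
    for line in lines:
        match = LOG_RE.search(line)
        if not match or match.group("status") != "404":
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        if path.lower().endswith(suffix):
            counts[path] += 1
    return counts.most_common(n)

# Made-up sample lines, for illustration only.
sample = [
    '198.51.100.9 - - [01/Mar/2012:08:00:00 +0100] "GET /mirserver.rar HTTP/1.1" 404 310 "-" "-"',
    '198.51.100.9 - - [01/Mar/2012:08:00:01 +0100] "GET /web.rar HTTP/1.1" 404 310 "-" "-"',
    '198.51.100.4 - - [01/Mar/2012:08:00:02 +0100] "GET /mirserver.rar?x=1 HTTP/1.1" 404 310 "-" "-"',
    '198.51.100.4 - - [01/Mar/2012:08:00:03 +0100] "GET /save.asp HTTP/1.1" 404 310 "-" "-"',
    '203.0.113.5 - - [01/Mar/2012:08:00:04 +0100] "GET /index.html HTTP/1.1" 200 5120 "-" "-"',
]

print(top_missing(sample, ".rar"))
# prints [('/mirserver.rar', 2), ('/web.rar', 1)]
```

Calling the same function with `".asp"` or `".php"` would give the equivalent ranking for other file types.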

Some of these requests look like the work of scanners searching for website backups. But I did not see the same number of requests for .tar.gz or .zip files (except for “www.zip”)! I also saw requests for files named after numbers: 5555.rar, 8888.rar, 444.rar, etc. According to Google, those files are heavily infected with malware, but why look for them on my server?

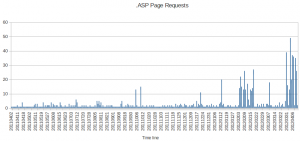

Finally, scanners are looking for .asp (Microsoft Active Server Pages) pages, especially over the last two months:

The top-10 of requested .asp pages is:

- /save.asp

- /plug/save.asp

- /gmsave.asp

- /diy.asp

- /shell.asp

- /dama.asp

- /upfile_flash.asp

- /FCKeditor/editor/filemanager/connectors/asp/connector.asp

- /xiaoma.asp

- /up_BookPicPro.asp

And what about common tools or web interfaces? The top-10 is:

- /setup.php

- /scripts/setup.php

- /admin

- /login.php

- /phpmyadmin/

- /myadmin/

- /mysql/

- /db/

- /administrator/

- /db/

As you can see, there is plenty of useful information in your Apache (or any other web server) log files! Keep an eye on your 404 errors to discover new trends! A temporary peak of 404 errors could mean that your server is under attack…
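One simple way to watch for such peaks is to count 404 errors per day and flag the days that stand well above the usual level. The sketch below is one possible approach, not a tested detection rule: it assumes the Apache "combined" log format, compares each day to the median of all days, and uses an arbitrary threshold factor of 3. The names `daily_404`, `spikes` and `fake_line` are hypothetical.

```python
import re
from collections import Counter
from statistics import median

# Assumption: Apache common/combined log format with a [dd/Mon/yyyy:...] timestamp.
LOG_RE = re.compile(
    r'\[(?P<day>\d{2}/[A-Za-z]{3}/\d{4}):[^\]]*\] "[^"]*" (?P<status>\d{3})\b'
)

def daily_404(lines):
    """Count 404 responses per day. Days without any 404 do not appear."""
    per_day = Counter()
    for line in lines:
        match = LOG_RE.search(line)
        if match and match.group("status") == "404":
            per_day[match.group("day")] += 1
    return per_day

def spikes(per_day, factor=3.0):
    """Return the days whose 404 count exceeds factor * median, in log order."""
    if not per_day:
        return []
    baseline = median(per_day.values())
    return [day for day, hits in per_day.items() if hits > factor * baseline]

def fake_line(day, status):
    """Build a made-up log line, used only to exercise the sketch."""
    return f'198.51.100.7 - - [{day}:12:00:00 +0000] "GET /x HTTP/1.1" {status} 0 "-" "-"'

sample = (
    [fake_line("01/Aug/2011", 404), fake_line("01/Aug/2011", 200)]
    + [fake_line("02/Aug/2011", 404)]
    + [fake_line("03/Aug/2011", 404)] * 5
)

print(spikes(daily_404(sample)))  # prints ['03/Aug/2011']
```

The factor is a tuning knob: a noisy site may need a higher value, and a baseline computed over a sliding window may work better than a global median.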

Xavier,

Did you ever find the cause of the rar file requests? I have seen a similar trend (same file names) on my site and was just curious. Thanks.

Hi Blake,

No magic behind the stats! As you said, grep/cat/awk are our best friends. Data was exported to .csv files and loaded into OpenOffice!

How did you do your analysis? Just grep/cat/head/cut/sort? How did you build those graphs?